Phi-4-Vision-Reasoning

Compact, open-weight multimodal AI model excelling at vision-language tasks, math, and UI understanding. High accuracy, low latency.

What is Phi-4-Vision-Reasoning?

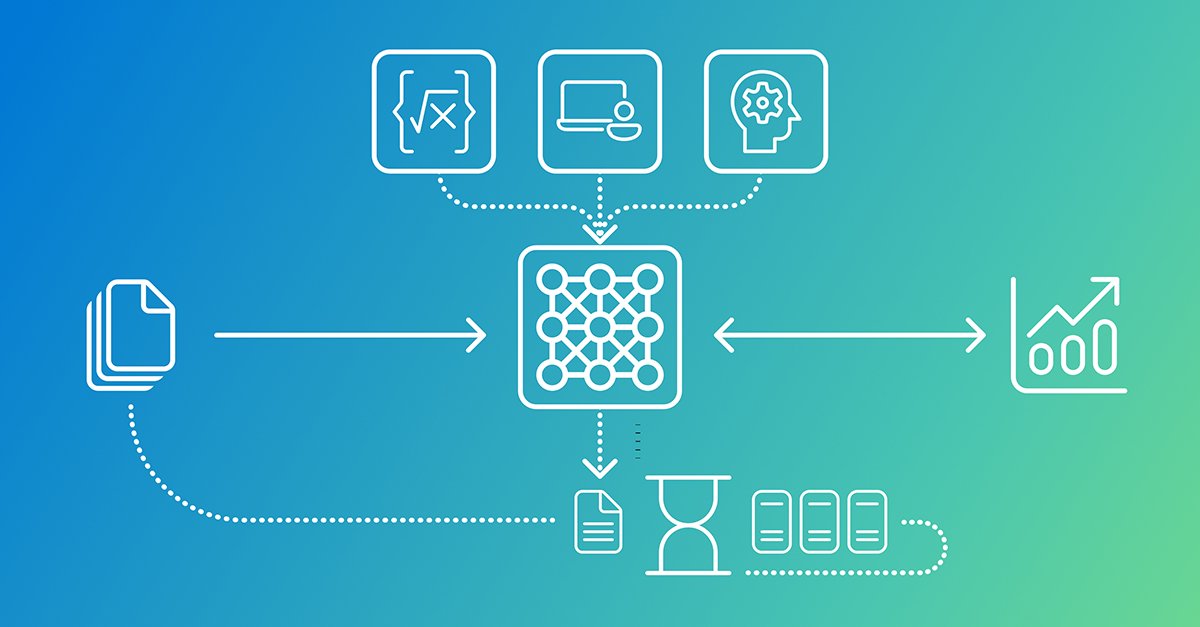

Phi-4-Vision-Reasoning is a 15-billion-parameter, open-weight multimodal reasoning model developed by Microsoft. It handles a wide range of vision-language tasks in a compact footprint, and it is designed to pair strong reasoning with efficient deployment so that capable multimodal AI is practical in more applications.

The model processes both visual and textual input. It is strongest on tasks that demand analytical reasoning, such as mathematical and scientific problems, and on interpreting and interacting with graphical user interfaces on computer and mobile screens. Its design balances performance against computational efficiency, in contrast to the trend toward ever-larger models with higher cost and latency. Through careful architectural choices and rigorous data curation, Phi-4-Vision-Reasoning achieves competitive performance with significantly fewer computational resources than many other open-weight models.

Key Features

- Compact and Efficient: A 15-billion-parameter model that delivers high performance at reduced computational cost and latency, making it suitable for resource-constrained environments.

- Multimodal Reasoning: Seamlessly integrates and reasons over both visual and textual data for a wide range of tasks.

- Specialized Reasoning: Excels in complex domains like mathematics and science, providing accurate and insightful analysis.

- User Interface Understanding: Capable of understanding and grounding elements within computer and mobile screen interfaces.

- Broad Vision-Language Capabilities: Supports tasks such as image captioning, visual question answering, document reading, and sequence analysis.

- Open-Weight Model: Freely available for research and commercial use, fostering community innovation and accessibility.

- Pareto Frontier Performance: Achieves a superior trade-off between accuracy and compute costs compared to many existing models.

- Efficient Training: Trained on a curated dataset of 200 billion tokens, significantly fewer than many comparable models use, which points to efficient data utilization.

How to Use Phi-4-Vision-Reasoning

Getting started with Phi-4-Vision-Reasoning is straightforward due to its open-weight nature and availability on popular platforms. Users can access the model through Microsoft Foundry, Hugging Face, and GitHub.

- Access the Model: Download the model weights from your preferred platform (Microsoft Foundry, Hugging Face, or GitHub).

- Integration: Integrate the model into your existing AI workflows or applications. The model can be used for various vision-language tasks.

- Input Data: Provide the model with both image and text inputs. For UI understanding tasks, input screenshots or screen recordings.

- Task Execution: Utilize the model for tasks like image analysis, answering questions about visual content, solving math problems presented visually, or interpreting UI elements.

- Fine-tuning (Optional): For specialized applications, the model can be further fine-tuned on custom datasets to enhance performance in specific domains.

Detailed documentation and best practices for training and deployment are available alongside the model release, guiding users on optimizing its capabilities.
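
The steps above can be sketched in Python with the Hugging Face libraries. This is a minimal sketch under stated assumptions, not the documented usage: the repository id `microsoft/Phi-4-Vision-Reasoning` is a placeholder built from the name used on this page, and it is an assumption that the checkpoint works with the generic `image-text-to-text` pipeline in a recent `transformers` release. The model card is the authority on the real identifier and loading code.

```python
# Assumption: placeholder repo id derived from the model name on this page.
# Replace it with the identifier given on the official model card.
MODEL_ID = "microsoft/Phi-4-Vision-Reasoning"  # hypothetical


def build_messages(image_ref: str, question: str) -> list[dict]:
    """Build a one-turn chat request that pairs one image with a text prompt.

    image_ref may be an http(s) URL or a local file path (e.g. a screenshot).
    """
    return [
        {
            "role": "user",
            "content": [
                {"type": "image", "url": image_ref},
                {"type": "text", "text": question},
            ],
        }
    ]


def download_weights(model_id: str = MODEL_ID) -> str:
    """Step 1: fetch the model files into the local Hugging Face cache."""
    from huggingface_hub import snapshot_download  # imported lazily

    return snapshot_download(repo_id=model_id)


def ask(image_ref: str, question: str, model_id: str = MODEL_ID) -> str:
    """Steps 2-4: load the model, pass image + text, return the answer text.

    Assumption: the checkpoint is compatible with the generic
    "image-text-to-text" pipeline; some models need custom loading code.
    """
    from transformers import pipeline  # imported lazily (heavy dependency)

    pipe = pipeline("image-text-to-text", model=model_id)
    result = pipe(
        text=build_messages(image_ref, question),
        max_new_tokens=256,
        return_full_text=False,  # return only the newly generated answer
    )
    return result[0]["generated_text"]
```

A call such as `ask("screenshot.png", "Which button submits the form?")` would cover the UI understanding case, provided the assumptions above hold. The heavy imports sit inside the functions so that the request-building helper can be reused or tested without downloading anything.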

Use Cases

- Educational Tools: Assisting students with homework, particularly in math and science, by analyzing problems presented visually or textually.

- Accessibility Software: Helping visually impaired users understand images, documents, and computer interfaces by providing detailed descriptions and interactions.

- Automated Customer Support: Analyzing screenshots of user issues to provide faster and more accurate troubleshooting assistance.

- Content Moderation: Reviewing images and associated text for policy violations, especially in complex visual contexts.

- Robotics and Automation: Enabling robots to understand their environment through visual input and interact with control interfaces.

- Document Analysis: Extracting information from receipts, forms, and complex documents, including understanding layout and specific fields.

FAQ

- Q: What makes Phi-4-Vision-Reasoning different from other multimodal models?
  A: Its balance of performance and efficiency. It achieves competitive accuracy with significantly lower computational requirements and faster inference than many larger or similarly sized models, and it is particularly strong in reasoning tasks and UI understanding.

- Q: Is Phi-4-Vision-Reasoning suitable for real-time applications?
  A: Yes. Its compact size and efficient design make it well suited to real-time applications where low latency is crucial, such as interactive assistance or dynamic environment analysis.

- Q: Can I use Phi-4-Vision-Reasoning for commercial purposes?
  A: Yes. The model is released with open weights and is available for both research and commercial use.

- Q: What kind of hardware is recommended to run Phi-4-Vision-Reasoning?
  A: Specific requirements vary with usage, but its efficient design allows it to run on more modest hardware than larger models need. Detailed hardware recommendations can be found in the model's documentation.

- Q: How does the training data compare to other models?
  A: Phi-4-Vision-Reasoning was trained on 200 billion tokens of multimodal data, substantially fewer than many comparable models, some of which use over 1 trillion tokens (at least five times as many). This careful data curation is key to its performance and cost-effectiveness.
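
For the hardware question, a rough lower bound on memory can be worked out from the 15 billion parameter count alone. The sketch below is back-of-the-envelope arithmetic, not an official requirement: it counts only the weights, ignores activations, the key-value cache, and framework overhead, and the listed precisions are illustrative assumptions rather than formats the release is confirmed to ship in.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


PARAMS = 15e9  # 15 billion parameters, as stated in this listing

# Assumption: these precisions are common choices, used here for illustration.
PRECISIONS = [
    ("float32", 4.0),
    ("bfloat16 / float16", 2.0),
    ("int8", 1.0),
    ("4-bit", 0.5),
]

for label, nbytes in PRECISIONS:
    print(f"{label:>18}: ~{weight_memory_gb(PARAMS, nbytes):.1f} GB for weights")
```

At 16-bit precision the weights alone come to about 30 GB, and about 7.5 GB at 4 bits, so actual usage will be higher once inputs and generation state are included.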
