Lamatic.ai

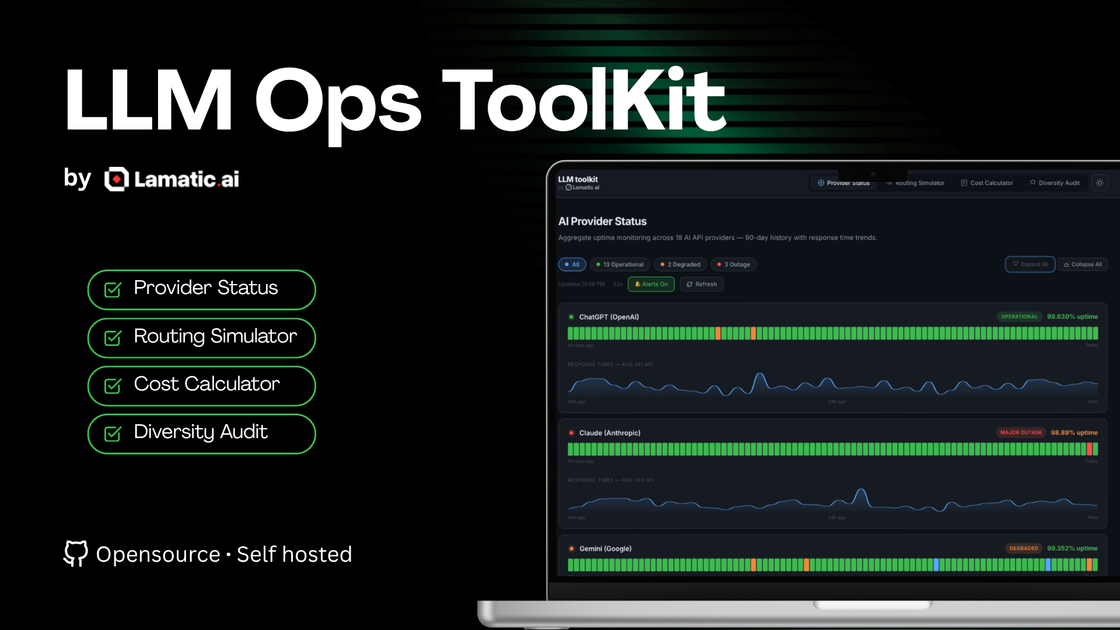

Lamatic.ai’s LLM Ops Toolkit provides real-time uptime monitoring across 18 AI API providers, true TCO cost analysis, a routing simulator, and a maturity audit.

What is Lamatic.ai?

Lamatic.ai’s LLM Ops Toolkit helps teams evaluate and operate multiple LLM providers with planning and operational visibility in mind. It combines tooling for cost estimation, model routing simulation, provider uptime monitoring, and an operations maturity assessment.

The core purpose is to support decision-making around which models to use, how to route requests across providers, and how to quantify operational “hidden costs” (such as time spent on model operations) alongside observed provider reliability.

Key Features

- LLM cost calculator and true cost analysis: Estimates monthly and annual costs from inputs such as the number of providers, monthly API spend, engineering team size, time allocated to model operations, and a TCO multiplier, and uses them to compute a “true” monthly cost and a hidden cost.

- Routing simulator with strategy comparison: Simulates request routing across different models using parameters such as request volume, request complexity, and a routing strategy (for example, cost-optimized or quality-first) to estimate cost savings and quality/latency outcomes.

- Model diversity and maturity audit: Assesses LLM ops maturity using a set of targeted questions (shown as a maturity assessment with recommendations) to guide next steps.

- Capability radar (model comparison view): Displays a capability radar style comparison for multiple listed models, including cost per 1K tokens, quality score, and latency.

- Aggregate uptime monitoring across AI API providers: Tracks provider status with a 90-day history and response-time trends, including operational states such as operational, degraded, and outage; includes outage notification controls (as indicated by an alert icon).
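The cost calculator's inputs can be combined in several ways, and the page does not publish its exact formula. The following is a minimal sketch under one assumed formula: engineering time spent on model operations is added to API spend, and the TCO multiplier scales the total. The `monthly_cost_per_engineer` input and all names here are hypothetical, not part of the toolkit.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    monthly_api_spend: float          # USD billed by providers per month
    engineers: int                    # engineering team size
    monthly_cost_per_engineer: float  # hypothetical input: fully loaded cost, USD
    model_ops_share: float            # fraction of engineering time on model ops, 0..1
    tco_multiplier: float = 1.0       # assumed to scale the combined total


def true_monthly_cost(inputs: CostInputs) -> dict:
    """Assumed formula: (API spend + model-ops engineering cost) * TCO multiplier."""
    engineering_cost = (
        inputs.engineers * inputs.monthly_cost_per_engineer * inputs.model_ops_share
    )
    true_cost = (inputs.monthly_api_spend + engineering_cost) * inputs.tco_multiplier
    return {
        "true_monthly_cost": true_cost,
        # Hidden cost here means everything beyond the raw API bill.
        "hidden_monthly_cost": true_cost - inputs.monthly_api_spend,
        "true_annual_cost": true_cost * 12,
    }


example = true_monthly_cost(
    CostInputs(
        monthly_api_spend=10_000,
        engineers=5,
        monthly_cost_per_engineer=12_000,
        model_ops_share=0.25,
        tco_multiplier=1.5,
    )
)
print(example)
```

With these illustrative inputs, a 10,000 USD API bill becomes a 37,500 USD “true” monthly cost, of which 27,500 USD is hidden. The figures only show the mechanics; the toolkit's own calculation may weight the inputs differently.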

How to Use Lamatic.ai

- Start with your baseline assumptions in the cost calculator: set the number of LLM providers, monthly API spend, engineering team size, and the percentage of engineering time spent on model operations to generate a “true cost” and hidden cost estimate.

- Run a routing simulation: choose a request volume and complexity and then compare routing strategy outcomes (for example, routing that prioritizes cost versus routing that prioritizes quality) to quantify potential savings and expected quality/latency shifts.

- Assess provider reliability using the uptime monitoring view to review the last 90 days of uptime and response-time trends across the supported set of providers.

- Complete the maturity audit by answering the targeted questions to surface recommended next steps and identify where your current LLM operations process fits on the maturity spectrum.
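The routing simulation step above can be approximated offline to sanity-check the numbers. This is a sketch, not the toolkit's simulator: the two-model catalogue uses round placeholder values rather than real provider pricing, and the cost-optimized rule (complex requests go to the highest-quality model, everything else to the cheapest) is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    cost_per_1k_tokens: float  # USD, placeholder value
    quality: float             # score on a 0..100 scale, placeholder value
    latency_ms: float          # placeholder value


# Hypothetical catalogue with round numbers; not real provider data.
MODELS = [
    Model("small", 0.5, 70.0, 300.0),
    Model("large", 4.0, 90.0, 900.0),
]


def pick_model(strategy: str, is_complex: bool) -> Model:
    best = max(MODELS, key=lambda m: m.quality)
    if strategy == "quality_first":
        return best
    if strategy == "cost_optimized":
        # Assumed rule: only complex requests justify the best model.
        return best if is_complex else min(MODELS, key=lambda m: m.cost_per_1k_tokens)
    raise ValueError(f"unknown strategy: {strategy}")


def simulate(strategy: str, monthly_requests: int, complex_share: float,
             tokens_per_request: int = 1000) -> dict:
    if monthly_requests <= 0:
        raise ValueError("monthly_requests must be positive")
    complex_requests = round(monthly_requests * complex_share)
    simple_requests = monthly_requests - complex_requests
    cost = quality = latency = 0.0
    for count, is_complex in ((complex_requests, True), (simple_requests, False)):
        model = pick_model(strategy, is_complex)
        cost += count * tokens_per_request / 1000 * model.cost_per_1k_tokens
        quality += count * model.quality
        latency += count * model.latency_ms
    return {
        "monthly_cost": cost,
        "avg_quality": quality / monthly_requests,
        "avg_latency_ms": latency / monthly_requests,
    }


quality_first = simulate("quality_first", 100_000, 0.25)
cost_optimized = simulate("cost_optimized", 100_000, 0.25)
monthly_savings = quality_first["monthly_cost"] - cost_optimized["monthly_cost"]
print(quality_first, cost_optimized, monthly_savings)
```

Comparing the two result dictionaries shows the trade-off the simulator is meant to surface: the cost-optimized run spends less but reports a lower average quality and a lower average latency, because most traffic lands on the smaller model.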

Use Cases

- Planning multi-provider LLM budgets: A team can use the cost calculator to convert raw API billing into a broader “true monthly cost” view that includes engineering time and an estimated TCO multiplier, helping justify operational investments.

- Evaluating whether routing can reduce spend: By simulating a routing strategy where a portion of traffic goes to cheaper models, an engineering team can estimate potential annual savings and compare conservative versus optimistic routing allocations.

- Comparing models under workload assumptions: Teams can use the capability radar and routing simulator together to compare listed models by token cost and latency, then validate how routing impacts average quality and latency under a given request profile.

- Operational risk review for provider performance: Using aggregate uptime monitoring with a 90-day history, teams can review response-time trends and outages/degradations to inform provider strategy or incident planning.

- Gap analysis for LLM Ops maturity: Organizations that are new to LLM Ops, or that already have tooling in place, can use the maturity audit's questionnaire-driven assessment to structure improvements.
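For the operational risk review use case, a 90-day status history can be reduced to a few summary figures. The sketch below assumes one status per day, drawn from the three states the page names (operational, degraded, outage), and assumes that degraded days still count as “up”. The function name and the sample history are hypothetical.

```python
from collections import Counter

STATES = ("operational", "degraded", "outage")


def summarize_uptime(daily_states: list[str]) -> dict:
    """Summarize a per-day status history for one provider."""
    if not daily_states:
        raise ValueError("history is empty")
    unknown = set(daily_states) - set(STATES)
    if unknown:
        raise ValueError(f"unknown states: {sorted(unknown)}")
    counts = Counter(daily_states)
    days = len(daily_states)
    return {
        "days": days,
        # Assumption: a degraded day is still counted as available.
        "uptime_pct": 100 * (days - counts["outage"]) / days,
        "fully_operational_pct": 100 * counts["operational"] / days,
        "outage_days": counts["outage"],
        "degraded_days": counts["degraded"],
    }


# Made-up 90-day history: 87 operational days, 2 degraded, 1 outage.
history = ["operational"] * 87 + ["degraded"] * 2 + ["outage"]
summary = summarize_uptime(history)
print(summary)
```

Reporting both percentages keeps the degraded-versus-outage distinction visible, which matters when a provider is rarely down but often slow.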

FAQ

- What metrics does the toolkit calculate for cost? The page describes a true cost analysis that combines monthly API spend with engineering time on model operations and a TCO multiplier, producing a “true monthly cost” and hidden cost figures.

- Can I simulate routing across multiple models? Yes. The routing simulator is designed to visualize how routing distributes requests across models and to estimate cost savings and routing outcomes.

- Which providers are covered by uptime monitoring? The uptime monitoring section states it covers 18 AI API providers and provides a 90-day history with response time trends.

- What does the maturity audit measure? The maturity audit is presented as an assessment using 10 targeted questions and generates ad hoc recommendations and a capability radar-style view.

Alternatives

- LLM cost and token accounting tools (cost dashboards): These focus on API usage and token cost tracking, but typically don’t include the same combination of true TCO modeling, routing simulation, provider uptime history, and maturity auditing.

- General API uptime/latency monitoring platforms: Monitoring tools can track uptime and response times for endpoints, but may require more setup to model LLM routing decisions and cost/quality trade-offs across multiple model providers.

- Custom routing logic with internal analytics: Teams can build routing and evaluation in-house using their telemetry; this can replicate parts of the simulator but usually requires more engineering effort to build cost/quality/latency comparisons and historical provider status views.

Alternatives

ClawTick

ClawTick is a CLI-first AI agent automation platform for scheduling webhook tasks on cron with monitoring, alerts, retries, and execution logs.

OpenFlags

OpenFlags is an open source, self-hosted feature flag system with a control plane and typed SDKs for progressive delivery and safe rollouts.

skills-janitor

Audit, track usage, and compare your Claude Code skills with skills-janitor—nine focused slash commands and zero dependencies.

BenchSpan

BenchSpan runs AI agent benchmarks in parallel, captures scores and failures in run history, and uses commit-tagged executions to improve reproducibility.

Rectify

Rectify is an all-in-one operations platform for SaaS, combining monitoring, analytics, support, roadmaps, changelogs, and agent management—via conversation.

PromptScout

PromptScout tracks how your brand is mentioned, which competitors are recommended, and what sources are cited in AI answers—plus website audits.