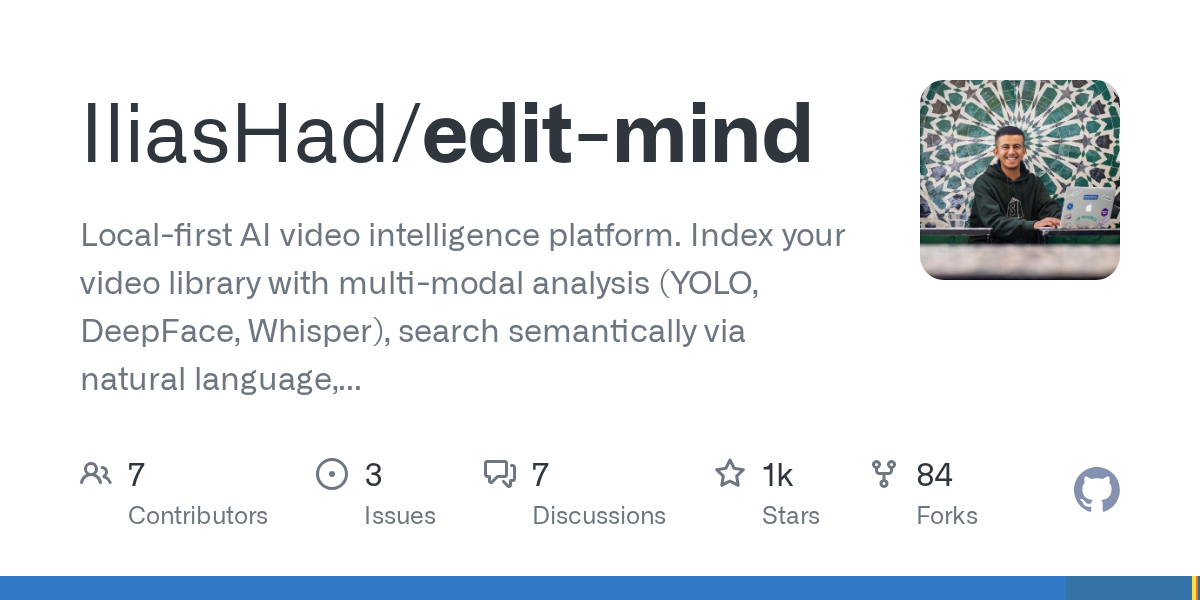

edit-mind

edit-mind is a local-first AI video intelligence platform that indexes videos with transcription, face/object/text analysis, and semantic embeddings for natural language search.

What is Edit Mind?

It processes videos to extract metadata such as transcriptions, detected objects, and faces, then stores that information for semantic querying.

The core purpose is to turn an existing set of videos into searchable knowledge, covering whole videos and, where applicable, specific scenes. It runs via Docker, so it can work on any computer or server that has Docker installed.

Key Features

- Background video indexing service: Watches for new video files and queues them for AI-powered analysis so your library stays up to date.

- Multi-model video analysis: Extracts metadata including face recognition, transcription, object & text detection, and scene-level analysis.

- Vector-based semantic search (ChromaDB): Supports natural-language search over video content using embeddings stored in ChromaDB.

- Local execution with Docker: Runs as containerized services using Docker Compose to keep setup modular and deployable on different machines.

- Model options for AI/NLP processing: Uses Whisper for transcription and lets you choose between Google Gemini and a local model served via Ollama for NLP tasks (per configuration).
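
As a rough illustration of the background indexing idea above, the following is a minimal polling sketch in Python. It is not edit-mind's actual watcher: the function name `scan_for_new_videos`, the extension list, and the in-memory queue are all assumptions made for the example, and the source does not say which file formats are supported or how the real queue is implemented.

```python
import tempfile
from collections import deque
from pathlib import Path

# Assumed set of extensions; the source does not list supported formats.
VIDEO_EXTENSIONS = {".mp4", ".mov", ".mkv", ".avi", ".webm"}


def scan_for_new_videos(media_dir, seen, queue):
    """Queue video files under media_dir that have not been seen before.

    `seen` is a set of absolute paths already queued; `queue` is any
    object with an append() method. Returns the number of files newly
    queued on this pass.
    """
    added = 0
    for path in sorted(Path(media_dir).rglob("*")):
        if path.is_file() and path.suffix.lower() in VIDEO_EXTENSIONS:
            key = str(path.resolve())
            if key not in seen:
                seen.add(key)
                queue.append(key)
                added += 1
    return added


if __name__ == "__main__":
    # Demo on a throwaway folder: one video file, one unrelated file.
    with tempfile.TemporaryDirectory() as media_dir:
        (Path(media_dir) / "holiday.mp4").touch()
        (Path(media_dir) / "notes.txt").touch()
        seen, queue = set(), deque()
        print(scan_for_new_videos(media_dir, seen, queue), "file(s) queued")
```

Calling the function repeatedly (for example on a timer) only queues files that appeared since the previous pass, which is the behavior the feature description implies.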

How to Use Edit Mind

- Install and run Docker Desktop (or ensure Docker is available on your server).

- Clone the repository and run through the provided setup flow.

- Expose your media folder to Docker by configuring Docker Desktop file sharing (macOS/Windows). On Linux, file sharing is typically enabled by default.

- Create environment files: Copy `.env.example` and `.env.system.example` to `.env` and `.env.system`, then configure the required settings.

- Set the video folder path (`HOST_MEDIA_PATH`) and choose your AI model option:

  - Ollama: set `USE_OLLAMA_MODEL`, plus `OLLAMA_HOST`, `OLLAMA_PORT`, and `OLLAMA_MODEL` (and run `ollama serve` / pull the model first).

  - Gemini: set `USE_GEMINI` and provide `GEMINI_API_KEY`.

- Generate security keys: Set `ENCRYPTION_KEY` and `SESSION_SECRET` using the commands shown in the setup guide.

- Start the Docker Compose stack (the repo provides both a standard compose file and a CUDA-oriented one for NVIDIA GPUs).
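
Before starting the stack, it can help to check that the environment settings named in the steps above are filled in. The Python sketch below is a hypothetical helper, not part of edit-mind. It assumes simple `KEY=VALUE` lines, assumes the `USE_OLLAMA_MODEL` and `USE_GEMINI` flags take boolean-like values such as `true`, and does not know which variable belongs in `.env` versus `.env.system`, so it expects the contents of both to be passed in together.

```python
# Variable names come from the setup steps; everything else is assumed.
REQUIRED_ALWAYS = ("HOST_MEDIA_PATH", "ENCRYPTION_KEY", "SESSION_SECRET")
OLLAMA_VARS = ("OLLAMA_HOST", "OLLAMA_PORT", "OLLAMA_MODEL")
GEMINI_VARS = ("GEMINI_API_KEY",)


def parse_env(text):
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def is_enabled(value):
    # Assumption: flags are boolean-like strings; the real format may differ.
    return value.lower() in {"1", "true", "yes"}


def missing_settings(env):
    """Return the names of settings that are absent or empty."""
    missing = [name for name in REQUIRED_ALWAYS if not env.get(name)]
    use_ollama = is_enabled(env.get("USE_OLLAMA_MODEL", ""))
    use_gemini = is_enabled(env.get("USE_GEMINI", ""))
    if use_ollama:
        missing += [name for name in OLLAMA_VARS if not env.get(name)]
    if use_gemini:
        missing += [name for name in GEMINI_VARS if not env.get(name)]
    if not (use_ollama or use_gemini):
        missing.append("USE_OLLAMA_MODEL or USE_GEMINI")
    return missing


if __name__ == "__main__":
    sample = "HOST_MEDIA_PATH=/videos\nUSE_GEMINI=true\nENCRYPTION_KEY=abc\n"
    print("Missing:", missing_settings(parse_env(sample)))
```

An empty list from `missing_settings` means every setting mentioned in the steps has a value; it says nothing about whether the values themselves are valid.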

Use Cases

- Search by spoken words: Query your library with phrases you remember from audio, relying on transcription extracted from videos.

- Find videos containing specific objects or on-screen text: Use natural-language queries tied to object & text detection outputs generated during indexing.

- Locate scenes with known faces: Use face recognition-derived metadata to narrow results to videos or scenes where people appear.

- Curate and navigate large personal archives: Automatically keep metadata refreshed as new video files are added, then search without manual tagging.

- Run on a privacy-focused local environment: Index and search entirely on your own machine (or server) through Docker rather than requiring a hosted workflow.
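
The search use cases above all rest on comparing a query embedding with stored scene embeddings. The Python sketch below shows that idea with plain cosine similarity over hand-written toy vectors. It is an assumption-laden illustration only: it does not use ChromaDB's API, the record fields (`video`, `start_seconds`, `embedding`) are hypothetical, and real embeddings would come from a model and have many more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search_scenes(query_vector, scenes, top_k=3):
    """Return (score, video, start_seconds) for the top_k closest scenes."""
    scored = [
        (cosine_similarity(query_vector, scene["embedding"]), scene)
        for scene in scenes
    ]
    # Sort by score only, so scene dicts are never compared directly.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [
        (round(score, 3), scene["video"], scene["start_seconds"])
        for score, scene in scored[:top_k]
    ]


if __name__ == "__main__":
    # Toy 3-dimensional "embeddings" invented for the demo.
    scenes = [
        {"video": "beach.mp4", "start_seconds": 12, "embedding": [0.9, 0.1, 0.0]},
        {"video": "office.mp4", "start_seconds": 40, "embedding": [0.0, 0.2, 0.9]},
        {"video": "beach.mp4", "start_seconds": 95, "embedding": [0.7, 0.6, 0.1]},
    ]
    for hit in search_scenes([1.0, 0.2, 0.0], scenes, top_k=2):
        print(hit)
```

Keeping a start time with each record is what lets a result point at a specific scene rather than only at a whole video.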

FAQ

- Is Edit Mind ready for production? The repository states it is in active development and not yet production-ready, so expect incomplete features and occasional bugs.

- Does Edit Mind require Docker? Yes. The setup instructions specify Docker Compose to run everything in containers.

- What AI options are supported for processing? The documentation mentions Whisper for transcription and either Google Gemini or Ollama for NLP-related tasks, selected via environment variables.

- How do I connect the system to my video files? Configure Docker to access your media folder (Docker Desktop file sharing on macOS/Windows) and set `HOST_MEDIA_PATH` in the `.env` file to match that folder path.

- Where does semantic search data live? The stack includes ChromaDB for vector-based semantic search and PostgreSQL (via Prisma ORM) as the relational database.

Alternatives

- Cloud-hosted video search platforms: These typically centralize processing on hosted infrastructure. Compared to edit-mind’s local-first Docker approach, they may trade privacy/control for simpler setup.

- Desktop media management tools with manual tagging: Some tools let you organize videos via user-entered tags and metadata. They differ in that they don’t perform AI-based transcription/object/face extraction for semantic search.

- Self-hosted transcription + search pipelines: You can build a workflow that transcribes video and then indexes text for search. This would differ from edit-mind by focusing more narrowly on audio/text rather than multi-modal analysis (faces/objects/scenes) and integrated semantic querying.

- General vector database search apps: You could use embeddings and a vector database to implement semantic search, but you’d need to handle video ingestion, multi-modal extraction, and scene-level linkage yourself—work that edit-mind packages in its pipeline.
