Visla AI Director Mode

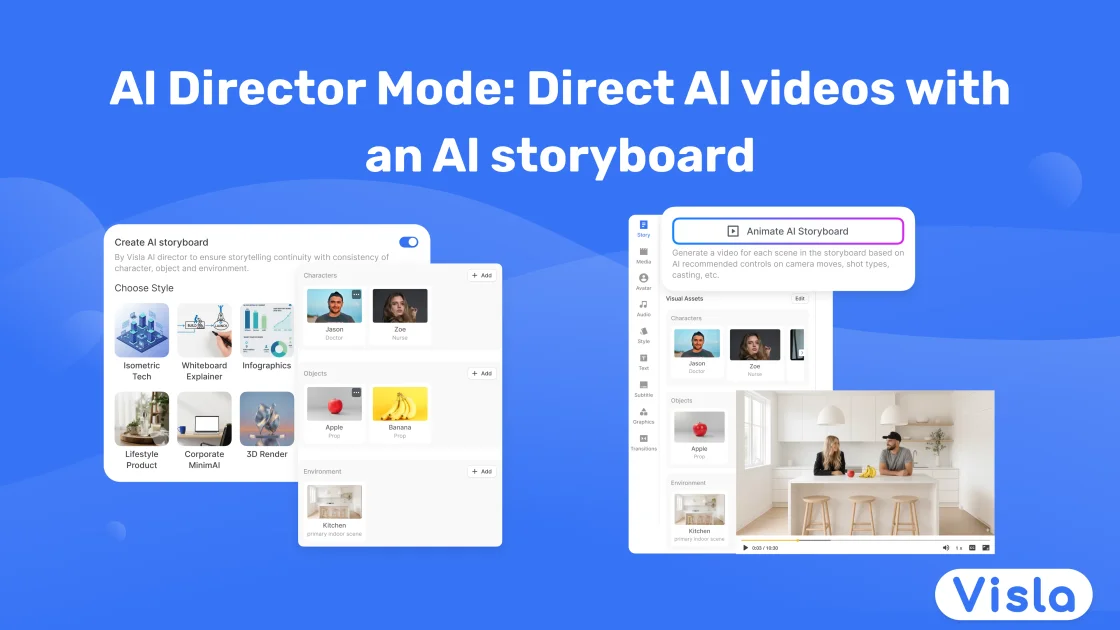

Visla AI Director Mode lets you plan and direct AI videos from an AI storyboard first: set the characters, products, and scenes, then convert approved shots into clips.

What is Visla AI Director Mode?

Visla’s AI Director Mode is a way to generate AI videos using a scene-by-scene plan built from an AI storyboard. Instead of producing motion immediately, you create and approve storyboard images first, then selectively convert scenes into full AI video clips.

Its core purpose is to give you more control over what appears in each scene—such as characters, objects, and environments—while keeping continuity and branding consistent from shot to shot.

Key Features

- Storyboard-first workflow: Your inputs are turned into a structured storyboard (beginning, middle, end) before any motion is generated, so you can review and adjust the plan early.

- Scene-level direction controls: Direction choices such as pace and voiceover style let you set how the video feels and guide the tone of the final result.

- Character control and consistency: You can generate characters with AI or upload references (e.g., real photos, team headshots, or a brand mascot) and then keep those characters consistent across scenes.

- Object and asset placement: You can generate or upload objects such as product photos, packaging shots, app screenshots, icons, and logo lockups, and reuse them across scenes so the visuals stay coherent.

- Environment selection for context: Choose environments (e.g., offices, classrooms, studios, homes, outdoor scenes, cinematic backdrops, or abstract branded spaces) so each scene is placed in a consistent “world.”

- Selective storyboard-to-video rendering: You decide which storyboard images remain as images and which scenes get converted into AI-generated video clips.

- Editing after generation: After you generate the clips, you can polish the final output using Visla’s editing tools.

How to Use Visla

- Start a new project by choosing Create Video and providing your starting input (such as a script, webpage, PDF, slide deck, raw footage, images, or a rough idea).

- Pick a visual style (e.g., photorealistic, cinematic, 3D render, infographic, flat vector, UGC/social, or other available styles) so your storyboard scenes share consistent lighting, composition, and look.

- Add or generate characters and objects you want to appear in the video (use AI generation or upload assets like headshots, product photos, app screenshots, icons, or logo lockups).

- Choose environments that match the video’s setting, and check that your characters and objects blend into each storyboard scene.

- Review the generated AI storyboard and tweak any scenes that don’t match your direction.

- Convert storyboard scenes into AI video clips selectively, then use Visla’s editing tools to refine the final video.

Use Cases

- Explainer videos with controlled characters and props: Create an AI storyboard from a script and choose consistent characters and on-screen objects so the narrative stays readable scene to scene.

- Training or onboarding content: Use uploaded headshots/photos for trainees or presenters, select a repeatable environment, and convert only the most relevant storyboard moments into motion.

- Product marketing videos that keep brand assets consistent: Lock in product images, packaging shots, and logo lockups so branding appears naturally in the scenes rather than changing across clips.

- Storyboard planning from multiple input types: Turn a webpage, PDF, slide deck, or even a rough idea into a beginning-to-end visual plan, then direct pace and voiceover style before motion generation.

- Reducing revisions by approving the plan before rendering: Review storyboard images first to make early decisions, then generate motion only for scenes where you want full video output.

FAQ

What inputs can I start with?

You can start with a script, webpage, PDF, slide deck, raw footage, images, or a rough idea.

Do I have to generate full video clips for every scene?

No. By default, scenes start as AI-generated storyboard images. You can keep scenes as images or turn them into full AI-generated video clips.

How does AI Director Mode help with consistency?

AI Director Mode is designed to keep characters, objects, and environments steady so you don’t get scene-to-scene drift such as “changing actors.” Branding assets are also placed so they remain consistent across scenes.

Can I control the look of the video?

Yes. You select a visual style (such as photorealistic, cinematic, 3D render, infographic, flat vector, or UGC/social) which sets rules for lighting, composition, and overall vibe across storyboard scenes.

What does “direction” include?

Direction includes choices like pace and voiceover style, along with setting the exact characters, objects, and environments you want on screen.

Alternatives

- Generic AI video generators (text-to-video or prompt-based): These typically prioritize immediate clip generation from prompts; the workflow may be less structured than storyboard-first planning.

- Video editing tools with templated motion: Useful if you already have assets and want deterministic edits, but you won’t get the same AI storyboard planning described here.

- AI image-to-video tools: These can help convert images into motion, but may not provide the same scene-by-scene continuity controls for characters, objects, and environments.

- Storyboarding tools or animation previsualization software: These support manual or semi-automated planning, but AI Director Mode’s distinguishing feature is using AI-generated storyboards to direct subsequent video generation.

VIDEOAI.ME

VIDEOAI.ME is an AI video generator to create studio-quality, publish-ready videos with realistic AI actors and voiceovers from text or a selfie.

艺映AI

艺映AI is a free AI video generation platform focused on transforming text and images into high-quality dynamic videos.

Plask

Plask is an AI motion capture and 3D animation tool that turns uploaded video into character motion, with video renders or 3D asset export.

讯飞绘镜 (iFlytek Huijing)

讯飞绘镜 (iFlytek Huijing) is an AI video creation platform that transforms creative ideas into scripts, storyboard images, and dynamic videos quickly and efficiently.

Topview AI

Topview AI is an AI video agent that instantly transforms images into high-converting user-generated content (UGC) videos for e-commerce and business growth.

MIRA vision

MIRA vision is an AI medical diagnostics system using patented synthetic pathology analysis for precise, rapid results in clinical workflows.