Discover tools that power great ideas

Odyssey is an AI lab developing general-purpose world models for interactive simulation, multimodal learning, and multi-agent environments.

Gemma 4 12B is a multimodal AI model from Google DeepMind for local laptop inference, with vision, audio, and text in one architecture.

Command A+ is Cohere’s open-source enterprise language model for complex reasoning, multimodal and multilingual workflows, and tool use, with private deployment support.

Perceptron Mk1 is a closed-source multimodal model for video understanding, image reasoning, and robotics workflows with structured visual outputs.

MiniMax M3 is an open-weight AI model for coding and agentic workflows, with native multimodal understanding and a 1M-token context window.

MiniCPM-V is an open-source multimodal LLM series for vision-language understanding from image, video, and text, built for edge and mobile deployment.

Adoptly helps SaaS teams create, target, and measure in-app announcements and product update widgets like modals, banners, and hints.

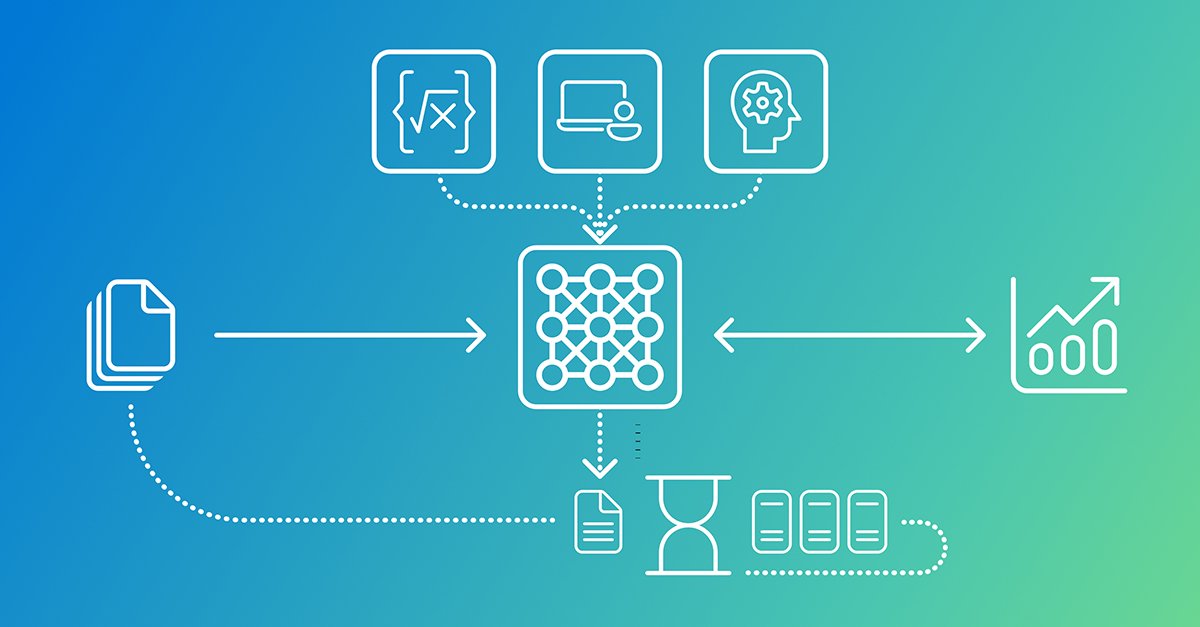

TwelveLabs delivers an enterprise video intelligence platform and API that turns raw video into searchable, AI-ready data with multimodal understanding.

Reka Edge is a locally deployable multimodal AI model for real-time video analysis, delivering grounded outputs like object bounding boxes.

Gemma 4 is an open model family for advanced reasoning and agentic workflows, with multiple sizes for local and edge multimodal use.

MulmoChat is a research prototype for multimodal AI chat on a canvas, combining conversational text with rich visual and interactive content, plus APIs and ComfyUI.

UNI-1, Luma’s multimodal reasoning model, generates pixels and supports directable, reference-guided image creation for scene completion and transformations.

Agentset is an open-source platform for production-ready RAG apps with reliable search and Q&A, including citations, multimodal ingestion, and metadata filtering.

MiniCPM-o 4_5 is a 9B omni-modal model for full-duplex live interaction across vision, speech, and text, with real-time concurrent streaming output.

Gemini Embedding 2 maps text, images, video, audio and documents into one embedding space for multimodal retrieval and classification.

Compact, open-weight multimodal AI model excelling at vision-language tasks, math, and UI understanding. High accuracy, low latency.

Build low-latency, multimodal voice and realtime audio experiences with the OpenAI Realtime API, including browser voice agents and realtime transcription.

GLM-5 is the next-generation open-source large language model from Zhipu AI, designed for reasoning, coding, and multimodal capabilities.

Seedance 2.0 is a unified multimodal audio-video joint generation architecture supporting text, image, audio, and video inputs for comprehensive content reference and editing.

MiniCPM-o 4.5 is a highly capable multimodal AI model designed for vision, speech, and full-duplex live streaming, offering advanced visual understanding, speech synthesis, and real-time interactive capabilities in a compact 9B parameter architecture.